Are you ready to take your marketing efforts to the next level?

Imagine a world where you could effortlessly uncover the most effective strategies to captivate your audience and drive better results. Well, the wait is over. Enter the game-changing technique known as split testing.

In this exciting guide, we’ll explore how split testing empowers you to compare different versions of your content and unlock insights that make a real impact. From emails that get opened to landing pages that convert, split testing holds the key to maximizing your marketing success.

Join us as we delve into the world of data-driven decision-making and discover the simple yet powerful art of split testing. Get ready to supercharge your marketing efforts and achieve remarkable results like never before. The future of your marketing success starts here!

What is split testing?

Split testing, also known as A/B testing, is a method used in marketing and experimentation to compare two or more versions of a webpage, email, advertisement, or other digital assets to determine which one performs better in achieving a desired outcome. The goal of split testing is to gather data and insights to make data-driven decisions and optimize the performance of marketing campaigns or user experiences.

A/B testing vs. split testing: What’s the difference?

There is no substantial difference between A/B testing and split testing. The terms are often used interchangeably and refer to the same concept. Both involve comparing two or more variations of a webpage, email, or another marketing element to determine which version performs better in achieving a specific goal.

Whether you refer to it as A/B testing or split testing, the underlying principle remains the same: conducting controlled experiments to optimize marketing efforts through data-driven decision-making.

How does split testing work?

Here’s how split testing typically works:

Identify the goal:

Clearly define the objective of the test. It could be increasing click-through rates, improving conversion rates, or any other specific metric you want to improve.

Create variants:

Develop multiple versions (variants) of the element you want to test. For example, if you’re testing a webpage, you may create two versions with different headlines, layouts, or call-to-action buttons. These variants should differ in one specific aspect (e.g., color, text, placement) while keeping other elements consistent.

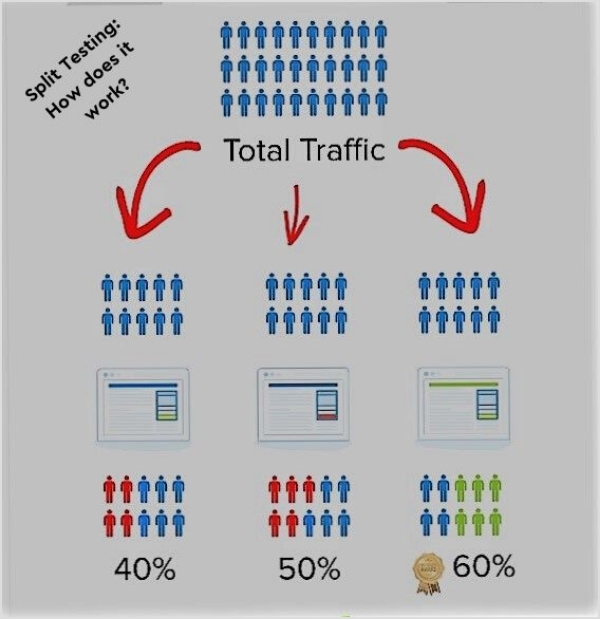

Split traffic:

Direct a portion of your target audience or website visitors to each variant. Assignment is usually random, or it follows predetermined rules such as a fixed percentage split. Each variant also needs enough visitors to reach a sample size large enough for statistically reliable results.
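A common way to implement a random but consistent split is to hash a stable visitor identifier. This way the same person always sees the same variant on every visit. The sketch below assumes each visitor has a persistent ID, for example one stored in a cookie. The function and experiment names are hypothetical, and individual testing tools may assign traffic differently.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants: list) -> str:
    """Deterministically map a visitor to one variant with roughly equal probability."""
    # Including the (hypothetical) experiment name keeps assignments independent across tests.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Treat the hash as a large integer and bucket it by the number of variants.
    return variants[int(digest, 16) % len(variants)]


# Simulate 10,000 visitors: each variant should receive roughly half of them.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "headline-test", ["A", "B"])] += 1
print(counts)
```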

Collect data:

As users interact with the different variants, data is collected on various metrics, such as click-through rates, conversion rates, bounce rates, or time spent on the page. This data helps you compare the performance of the variants and determine which one is more effective.
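At its core, collecting data means counting outcomes per variant. The sketch below assumes a simple, hypothetical event log with one record per visitor, noting the variant shown and whether the visitor clicked and converted. Real tools store events differently, and a real test needs far more visitors than this toy example.

```python
from collections import defaultdict

# Hypothetical event log: one record per visitor.
events = [
    {"variant": "A", "clicked": True, "converted": False},
    {"variant": "A", "clicked": False, "converted": False},
    {"variant": "A", "clicked": True, "converted": True},
    {"variant": "B", "clicked": True, "converted": True},
    {"variant": "B", "clicked": True, "converted": False},
    {"variant": "B", "clicked": True, "converted": True},
]


def summarize(records):
    """Return visitors, click-through rate, and conversion rate for each variant."""
    totals = defaultdict(lambda: {"visitors": 0, "clicks": 0, "conversions": 0})
    for e in records:
        t = totals[e["variant"]]
        t["visitors"] += 1
        t["clicks"] += int(e["clicked"])
        t["conversions"] += int(e["converted"])
    return {
        variant: {
            "visitors": t["visitors"],
            "ctr": t["clicks"] / t["visitors"],
            "conversion_rate": t["conversions"] / t["visitors"],
        }
        for variant, t in totals.items()
    }


for variant, stats in sorted(summarize(events).items()):
    print(variant, stats)
```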

Analyze results:

Use statistical analysis to evaluate the data and determine whether any observed differences between the variants are statistically significant. Statistical significance indicates that a difference is unlikely to be explained by random chance alone, although it cannot rule chance out completely.
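One standard way to analyze conversion-rate results is a two-proportion z-test. The sketch below uses that test together with a simple decision rule: only declare a winner when the p-value falls below a threshold chosen in advance. The counts are hypothetical. Many testing tools use other methods, such as Bayesian or sequential analysis, so treat this as an illustration rather than how any particular product works.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # No variation at all (e.g. zero conversions), so no evidence of a difference.
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


def pick_winner(conv_a, n_a, conv_b, n_b, alpha=0.05):
    """Return the better variant only if the difference is significant at level alpha."""
    z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    if p_value >= alpha:
        return None  # No reliable winner: keep the control or gather more data.
    return "B" if z > 0 else "A"


# Hypothetical results: 200 of 5,000 visitors converted on A, 260 of 5,000 on B.
z, p = two_proportion_z_test(200, 5000, 260, 5000)
print(f"z = {z:.2f}, p = {p:.4f}")
print(pick_winner(200, 5000, 260, 5000))
```

Returning `None` when the result is not significant mirrors the "implement the winning variant" step. A variant is only rolled out when the evidence supports it.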

Implement the winning variant:

Based on the results, identify the variant that performed better and implement it as the default option or apply the insights gained to further optimize your marketing or user experience.

Repeat and iterate:

Split testing is an iterative process. Once you implement the winning variant, you can continue to test new variations, further refining your approach and continuously improving performance.

Split testing allows you to make informed decisions based on empirical evidence rather than relying on assumptions or guesswork. By testing different variations, you can understand what resonates best with your audience and make data-backed improvements to achieve your marketing goals.

6 Best split testing tools to use in 2023:

Here are some of the best split-testing tools to use in 2023:

- Google Optimize:

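Google Optimize was a free tool by Google that integrated seamlessly with Google Analytics. It offered A/B testing and personalization features, making it a versatile choice for small to medium-sized businesses. Note that Google discontinued Optimize on September 30, 2023, so it is no longer available for new experiments.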

- Optimizely:

Optimizely is a powerful and widely used split testing platform. It offers a range of features, including A/B testing, multivariate testing, and personalization. Optimizely provides a user-friendly interface and robust reporting capabilities.

- VWO (Visual Website Optimizer):

VWO is a comprehensive split testing and conversion optimization platform. It offers A/B testing, multivariate testing, heatmaps, and user behavior tracking. VWO provides an intuitive visual editor and detailed reporting.

- Replug:

One of Replug’s standout features is A/B Testing, which allows users to experiment with different versions of their links to determine the most effective option. With Replug’s A/B Testing, users can create multiple variations of destination links, assign weights to each version, and analyze which performs best.

This data-driven approach helps improve click-through rates, conversions, and overall campaign success.

- Adobe Target:

Adobe Target is a robust split testing and personalization tool. It integrates well with other Adobe Experience Cloud (formerly Adobe Marketing Cloud) products and offers advanced targeting and segmentation options. Adobe Target is suitable for large enterprises with complex testing requirements.

- Unbounce:

Unbounce is primarily a landing page builder but also includes split testing functionality. It allows you to create and test different variations of landing pages to optimize conversions. Unbounce offers an intuitive drag-and-drop editor and solid analytics.

Remember to assess each tool based on your specific requirements, such as ease of use, integrations, statistical significance calculations, and cost. It’s also a good practice to explore customer reviews, request demos or trials, and consider the level of support provided by each tool before making a decision.
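Weighted split testing, like the per-link weights described for Replug above, can be pictured as a weighted random choice of destination for each click. The sketch below is a generic illustration with hypothetical URLs and weights. It is not Replug's implementation or API.

```python
import random
from collections import Counter

# Hypothetical destinations and their traffic weights (percentages).
destinations = {
    "https://example.com/landing-a": 50,
    "https://example.com/landing-b": 30,
    "https://example.com/landing-c": 20,
}


def pick_destination(weighted, rng=random):
    """Choose one URL with probability proportional to its weight."""
    return rng.choices(list(weighted), weights=list(weighted.values()), k=1)[0]


# Simulate 10,000 clicks with a seeded generator so the run is repeatable.
rng = random.Random(7)
clicks = Counter(pick_destination(destinations, rng) for _ in range(10_000))
for url, count in clicks.most_common():
    print(url, count)
```

Picking a destination per click is the simplest form of weighted rotation. If returning visitors must always see the same page, the choice would need to be tied to a stable visitor ID instead.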

Split testing best practices:

When conducting split testing, it’s important to follow some best practices to ensure accurate results and make informed decisions. Here are some key split testing best practices to keep in mind:

1. Clearly define your goals:

Clearly outline the specific metrics or outcomes you want to improve through split testing. This will help you focus your efforts and measure the success of your experiments accurately.

2. Test one variable at a time:

To pinpoint the impact of individual changes, isolate and test one variable at a time. Changing multiple elements simultaneously can make it difficult to attribute the results to a specific factor.
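A quick way to enforce the one-variable rule is to describe each variant as a set of fields and check that exactly one field differs from the control. The field names and values below are hypothetical.

```python
def changed_fields(control: dict, variant: dict) -> set:
    """Return the names of fields whose values differ between two variants."""
    keys = control.keys() | variant.keys()
    return {k for k in keys if control.get(k) != variant.get(k)}


# Hypothetical page variants: only the button color changes.
control = {"headline": "Start your free trial", "button_color": "blue", "layout": "single-column"}
variant_b = {"headline": "Start your free trial", "button_color": "green", "layout": "single-column"}

diff = changed_fields(control, variant_b)
if len(diff) != 1:
    raise ValueError(f"Variant changes {len(diff)} elements {sorted(diff)}; test one at a time.")
print("Testing:", next(iter(diff)))
```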

3. Define a sample size and duration:

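Determine the appropriate sample size and duration for your split tests before you start. A larger sample size reduces the margin of error. A longer test duration (commonly at least one full week or business cycle) accounts for variation over time, such as differences between weekdays and weekends.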
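For conversion-rate tests, a widely used approximation estimates the visitors needed per variant from four inputs: the baseline rate, the smallest rate you want to be able to detect, the significance level, and the statistical power. The sketch below implements that approximate formula. The 4% and 5% rates are hypothetical, and online calculators may give slightly different numbers because they use other variants of the formula.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate visitors needed in EACH variant to detect the change from
    baseline_rate to expected_rate with a two-sided test."""
    if baseline_rate == expected_rate:
        raise ValueError("expected_rate must differ from baseline_rate")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical: 4% baseline conversion rate, aiming to detect a lift to 5%.
print(sample_size_per_variant(0.04, 0.05), "visitors per variant")
```

With these inputs the estimate is roughly 6,700 visitors per variant. Because the effect size is squared in the denominator, halving the lift you want to detect roughly quadruples the required sample.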

4. Randomize test groups:

Ensure that the distribution of test variations is randomized to minimize bias. Randomizing helps ensure that any differences in performance between variations are due to the changes being tested, rather than external factors.

5. Monitor statistical significance:

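Use statistical significance calculations to determine whether the observed differences in performance between variations are statistically significant. Set your significance threshold and sample size before the test starts, and avoid stopping as soon as the results first look significant. Checking repeatedly and stopping early inflates the chance of a false positive.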

6. Segment your audience:

Consider segmenting your audience to gain insights into how different groups respond to variations. This can help you tailor your strategies and improve targeting based on specific user characteristics or behaviors.
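Segmenting results amounts to computing each variant's metrics separately for every audience group. The sketch below uses hypothetical rows tagged with device type. Each segment needs enough traffic on its own to be meaningful, and slicing results into many segments raises the chance of finding a difference by luck.

```python
from collections import defaultdict

# Hypothetical per-visitor results: (segment, variant, converted).
results = [
    ("mobile", "A", False), ("mobile", "A", True),
    ("mobile", "B", True), ("mobile", "B", True),
    ("desktop", "A", True), ("desktop", "A", False),
    ("desktop", "B", False), ("desktop", "B", False),
]


def conversion_by_segment(rows):
    """Return {(segment, variant): conversion_rate} for each combination seen."""
    visitors = defaultdict(int)
    conversions = defaultdict(int)
    for segment, variant, converted in rows:
        visitors[(segment, variant)] += 1
        conversions[(segment, variant)] += int(converted)
    return {key: conversions[key] / visitors[key] for key in visitors}


for (segment, variant), rate in sorted(conversion_by_segment(results).items()):
    print(f"{segment:8} {variant}: {rate:.0%}")
```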

7. Iterate and learn:

Split testing is an iterative process. Continuously analyze and learn from the results of your experiments. Use the insights gained to refine your strategies and implement further optimizations.

8. Document and track changes:

Keep a record of the changes made during split testing, including the variations tested, results, and any insights gained. This documentation will help you learn from past experiments and inform future testing strategies.

9. Test on different devices and platforms:

Ensure that your split tests are conducted across various devices, browsers, and platforms to account for differences in user experiences. This helps validate the performance of variations across different user environments.

10. Consider long-term impact:

Remember to assess the long-term impact of changes made through split testing. Some variations may produce short-term gains but may not be sustainable or have a positive impact in the long run.

FAQs

Why is split testing important in marketing?

Split testing is important because it allows you to make informed decisions based on actual data rather than assumptions. It helps you understand what resonates best with your audience, improve conversion rates, increase engagement, and ultimately maximize your marketing efforts.

What are the benefits of split testing?

Split testing provides several benefits, including identifying the most effective marketing strategies, optimizing conversion rates, improving user experience, increasing customer engagement, reducing bounce rates, and driving better overall campaign performance.

How can split testing improve conversion rates?

Split testing allows you to test different elements such as headlines, call-to-action buttons, visuals, layouts, or offers to determine which versions generate the highest conversion rates. By optimizing these elements based on data-driven insights, you can improve the effectiveness of your marketing campaigns and increase conversions.

What are some common elements to split test?

Common elements to split test include headlines, copywriting, images, colors, call-to-action buttons, pricing, page layouts, forms, subject lines, and email templates. These elements can have a significant impact on user engagement and conversion rates.

How do you determine the sample size for a split test?

The sample size for a split test depends on various factors such as your desired level of statistical significance, the expected effect size, and the variability of your data. There are online calculators and statistical tools available that can help you determine an appropriate sample size.

What is statistical significance in split testing?

Statistical significance in split testing indicates that the observed difference in performance between variations would be unlikely to arise from random chance alone if the variations actually performed the same. It is usually judged by comparing a p-value against a threshold set before the test (commonly 0.05, which corresponds to 95% confidence). It helps you decide whether a result is reliable enough to act on.

How long should a split test run for?

The duration of a split test depends on factors like your traffic volume, the expected effect size, and the level of statistical significance you want to achieve. It is generally recommended to run a split test long enough to reach the required sample size and to account for variations over time, such as differences between weekdays and weekends.
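Once you know the sample size each variant needs, a rough duration follows from your daily traffic. The sketch below adds two rule-of-thumb assumptions: a minimum of seven days, and rounding up to whole weeks so every day of the week is equally represented. The traffic figures are hypothetical.

```python
from math import ceil


def estimated_test_days(visitors_per_variant, num_variants, daily_visitors, min_days=7):
    """Days for every variant to reach its sample size, rounded up to whole weeks."""
    days = ceil(visitors_per_variant * num_variants / daily_visitors)
    days = max(days, min_days)  # assumed minimum of one full week
    # Round up to full weeks so weekdays and weekends are equally represented.
    return ceil(days / 7) * 7


# Hypothetical: 6,700 visitors needed per variant, 2 variants, 1,500 eligible visitors per day.
print(estimated_test_days(6_700, 2, 1_500))  # 14
```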

What are some best practices for conducting split tests?

Best practices for split testing include clearly defining goals, testing one variable at a time, randomizing test groups, monitoring statistical significance, segmenting your audience, iterating and learning from results, and documenting changes for future reference.

What tools or platforms can I use for split testing?

There are several split testing tools available, such as Optimizely, VWO (Visual Website Optimizer), Adobe Target, Convert, Unbounce, and Replug. Google Optimize was another popular option until Google discontinued it in September 2023. These tools provide user-friendly interfaces, statistical analysis, and reporting capabilities to conduct split tests effectively.

Can split testing be used for different marketing channels, such as email marketing or paid advertising?

Yes, split testing can be applied to various marketing channels. For email marketing, you can test different subject lines, email content, or send times. In paid advertising, you can test different ad copy, visuals, targeting options, or landing pages. Split testing can be adapted to suit different marketing channels and optimize performance in each of them.
